
The Artificial Road ahead: Objectifying the Discussion around AI

In our connected, digital world, artificial intelligence (AI) is no longer the stuff of science fiction. AI’s reach is far and wide, from intuitive chatbots handling customer queries to algorithms predicting market fluctuations. The promises of efficiency, productivity enhancements, and groundbreaking innovation are compelling.

But let’s take a moment and consider a less talked about aspect: the challenges and risks embedded within AI’s complex algorithms and processes. Think of AI as a multifaceted tool with enormous potential for both good and bad.

This article aims to guide you through the other side of AI, focusing on industries such as finance and creative arts, to name a few. Our journey isn’t about creating unnecessary fear or projecting a dark and dystopian future. Instead, we wish to engage in an honest conversation, backed by facts, about the potential hurdles and pitfalls that accompany AI’s many benefits.

We’ll delve into specific examples within industries, shedding light on how AI might inadvertently affect the job market, pose threats to security, or even unintentionally magnify biases. Our mission here is to ignite a balanced and conscientious discourse on the role of AI in our professional lives. The final two sections provide insights into academic findings and how the generally hyped discussion about the positive impact of AI neglects important aspects of the implementation of these systems from a company’s perspective.

Don’t get us wrong; we’re not here to quash the excitement around AI or stifle its growth. We aim to equip you, whether you’re a creative entrepreneur, finance professional, or an interested observer, with a comprehensive understanding of AI’s multifaceted nature. AI’s promise is vast, but harnessing it requires care and consideration. As we walk through various sections of this article, we’ll strive to understand the potential negatives of AI, finding ways to recognize and perhaps even alleviate these challenges.

The new era of automation! Are there also losers from the integration of AI?

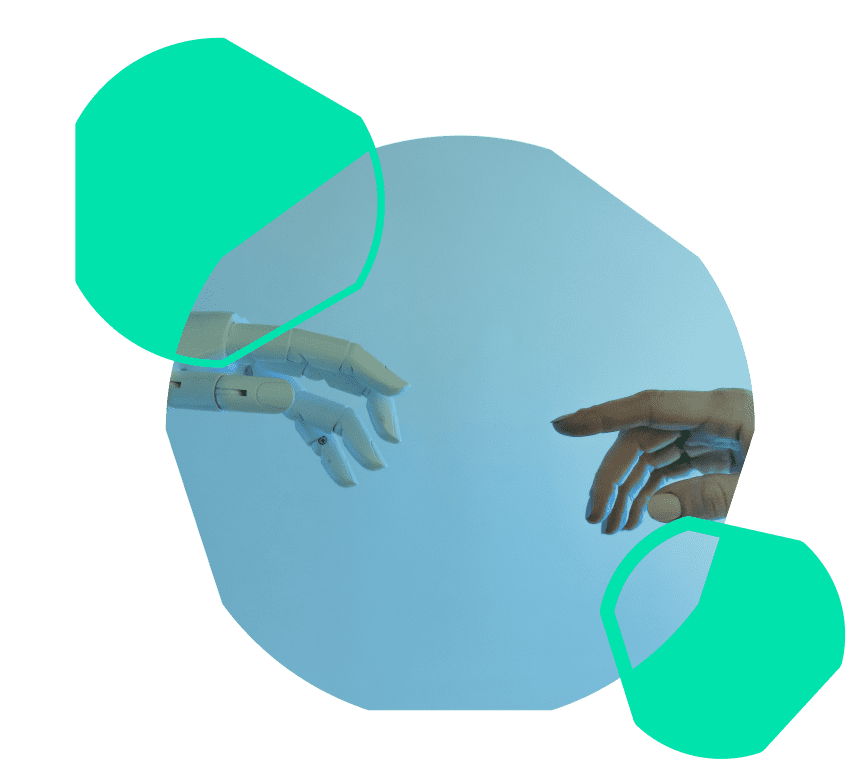

Across industries and continents, automation, fueled by AI, is transforming the business landscape. In industries such as banking and manufacturing, the application of AI is revolutionizing operations, streamlining processes, and cutting costs. This change is as immediate as it is inevitable, and it brings with it both opportunities and challenges. The impact of automation on different tasks and industries can be seen in the accompanying graphic.

But what does this mean for the individual worker? While AI handles tedious tasks, enabling professionals to concentrate on more nuanced aspects of their work, it’s also true that many jobs are disappearing in its wake. From factory floors to corporate offices, the rise of automation is reshaping the workforce, and its implications are still unfolding.

The societal and economic ramifications of AI-led changes to employment are extensive and complex. Widespread automation could lead to a significant number of job losses, particularly in roles that are easily replaced by AI. This threatens to hit lower-income workers the hardest, possibly widening social and economic divides. At the same time, advancing AI technology creates demand for new roles in the development, deployment, and maintenance of AI systems, such as AI engineers, data scientists, machine learning specialists, AI ethicists, and AI trainers. Those unable to adapt to new roles may find themselves at a disadvantage, adding to existing inequalities.

According to McKinsey, a rise in automation and artificial intelligence could affect women more than men, with women 1.5x more likely to need a new occupation by 2030. Black and Hispanic workers will also be disproportionately affected. At least 12 million US workers will likely need to change jobs by 2030, with those in lower-wage positions 14x more likely to be affected. A higher percentage of working women are employed in white-collar jobs, which is why the impact on the female workforce is expected to be even larger. Some of the most AI-exposed occupations with a majority-female employee base are office and administrative support; healthcare practitioners and technical; education, training and library; healthcare support; and community and social service.

The impact AI has on employment links technology, economics, and human well-being. The opportunities and challenges are great, and the stakes are high. Finding solutions and ensuring that the opportunities presented by AI are not negated by negative impacts will require the engagement of all sectors of society. The foundation for this is a whole-of-society discourse that involves not only those at the forefront of technology but everyone who has a stake in our shared future.

Exploring the technical foundations of AI and its impact on society

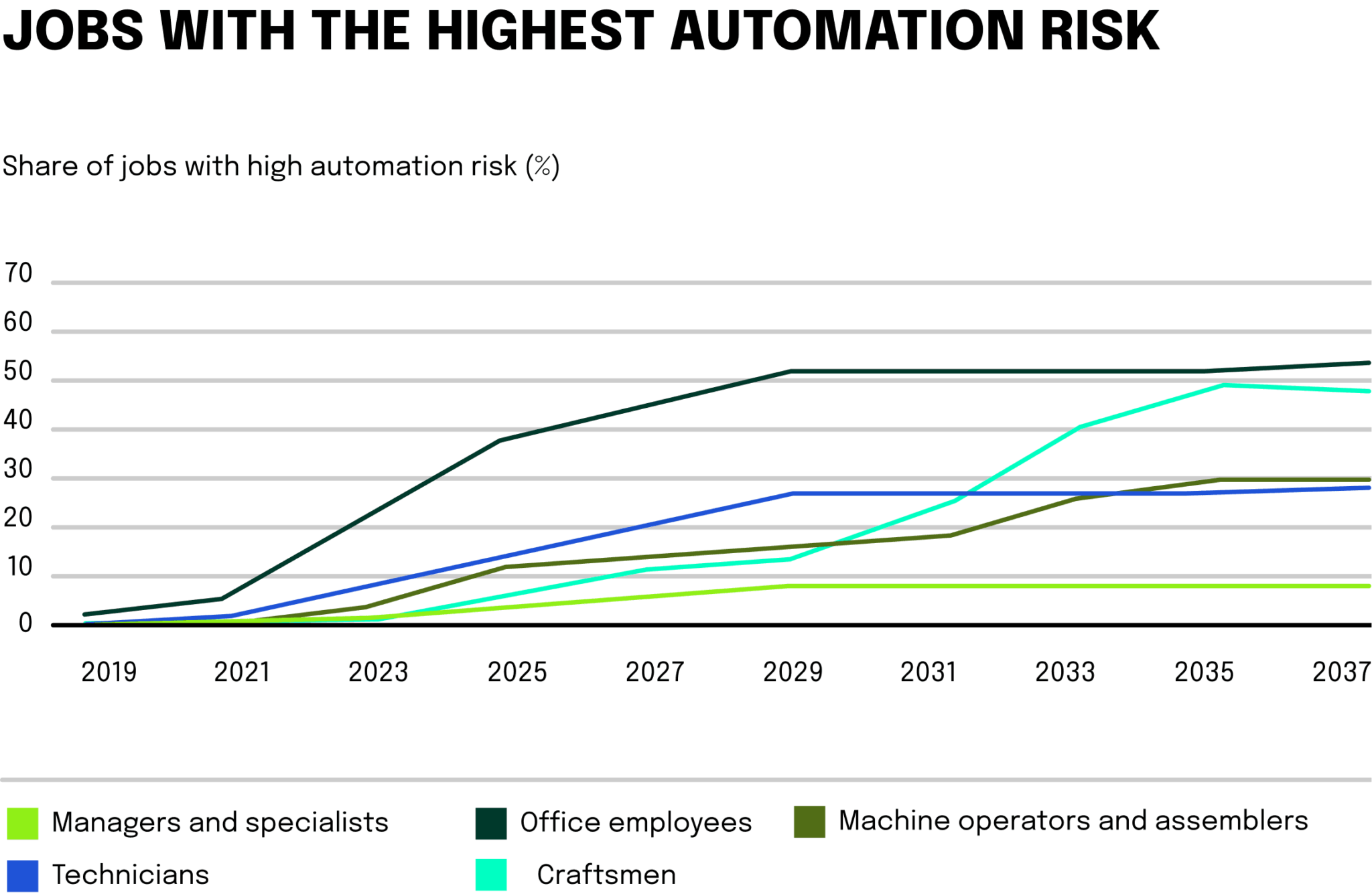

Delving into the machinery of AI, we uncover an intricate network of algorithms that learn from datasets using various methods, including supervised, unsupervised, and reinforcement learning. As the accompanying graphic shows, the British Medical Journal highlighted how data, design, use, and the world interact and are ultimately susceptible to AI biases.

We took the graphic as a basis to identify a few general AI biases that also appear in application fields outside the medical industry. As you will shortly find out, it is precisely the interaction between data, design, use, and real-world application that is sensitive to biases. For the future of reliable AI, we all need to make sure that the following four general problems are eliminated.

1. Data Selection Bias

This happens when the data used to train an AI system doesn’t sufficiently mirror the population it’s aimed at. For example, if facial recognition software is created using a dataset dominated by one racial group, it is likely to be biased toward that group.

2. Over-fitting to training data

This happens when the AI model can accurately predict values from the training dataset but cannot predict new data accurately. The model adheres too much to the training dataset and does not generalize to a larger population.

3. Algorithmic Bias

Sometimes, the models themselves can harbor biases. Specific computational techniques might unintentionally focus on certain aspects, leading to unwarranted distinctions.

4. Bias Detection Systems

Ironically, AI systems developed to inspect the integrity of training data and corresponding models might also introduce biases. The tools meant to detect biases could themselves be influenced by skewed data, thereby reinforcing the issue they are meant to resolve.
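To make the first point more tangible, here is a minimal sketch of how a data selection bias could be spotted before training. It compares the share of each group in a training set with its share in the target population. The group labels, the population shares, and the 10-percentage-point threshold are all made-up assumptions for illustration, not values from any real dataset.

```python
from collections import Counter

def representation_gaps(sample_groups, population_shares):
    """Return, per group, the sample share minus the population share."""
    counts = Counter(sample_groups)
    total = len(sample_groups)
    return {
        group: counts.get(group, 0) / total - share
        for group, share in population_shares.items()
    }

# Hypothetical target population and a hypothetical, skewed training set.
population_shares = {"A": 0.5, "B": 0.3, "C": 0.2}
training_groups = ["A"] * 80 + ["B"] * 15 + ["C"] * 5

gaps = representation_gaps(training_groups, population_shares)

# Flag groups that are under-represented by more than 10 percentage points
# (the threshold is an arbitrary choice for this sketch).
flagged = [group for group, gap in gaps.items() if gap < -0.10]

for group, gap in gaps.items():
    print(f"group {group}: gap of {gap:+.2f} versus the population")
print("Under-represented groups:", flagged)
```

A check like this only covers attributes that are actually recorded, so it is a starting point rather than a guarantee of a representative dataset.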

The societal ripple effects of bias in AI: the real-world impact

The social implications of AI biases extend far and wide, influencing various aspects of our life. A more detailed exploration reveals:

1. Inequality

In the workplace, algorithms to screen resumes might unintentionally prefer candidates with specific names or backgrounds, reflecting past hiring trends. Research has highlighted instances where male candidates were favored for technical roles. In healthcare, disparities have been found in algorithms guiding patient care, with a tendency to prioritize white patients. Scholarly articles have shed light on significant racial prejudices in some widely-used healthcare algorithms.

2. Economic Repercussions

Unfair lending algorithms might disproportionately reject loans to deserving applicants from underprivileged areas, stifling potential economic growth.

3. Legal and Moral Dilemmas

Legal battles over AI-driven discrimination are rising, creating complex legal issues. Regulations such as the GDPR in the European Union have also started to address algorithmic bias.

Looking ahead to eliminate AI biases

Tackling these biases necessitates strong technical measures and a multi-dimensional approach. Strategies such as fairness-aware modeling and inclusive development practices offer promising paths forward.
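One building block of fairness-aware modeling is measuring how differently a model treats groups. The sketch below computes the demographic parity difference, one common fairness metric among several, for a hypothetical lending model. The group names and approval decisions are invented for illustration.

```python
def approval_rate(decisions):
    """Share of positive decisions (1 = approved, 0 = rejected)."""
    return sum(decisions) / len(decisions)

def demographic_parity_difference(decisions_by_group):
    """Largest gap in approval rates between any two groups."""
    rates = {
        group: approval_rate(decisions)
        for group, decisions in decisions_by_group.items()
    }
    return max(rates.values()) - min(rates.values()), rates

# Hypothetical model decisions for two applicant groups.
decisions_by_group = {
    "group_a": [1, 1, 1, 0, 1, 1, 0, 1, 1, 1],  # 8 of 10 approved
    "group_b": [1, 0, 0, 1, 0, 0, 1, 0, 0, 1],  # 4 of 10 approved
}

gap, rates = demographic_parity_difference(decisions_by_group)
print("Approval rates:", rates)
print(f"Demographic parity difference: {gap:.2f}")
```

A large gap does not prove discrimination on its own, since the groups may differ in relevant ways, but it is a signal that the model deserves a closer look.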

The task is daunting, but the importance cannot be overstated. By deeply understanding the technological nuances and actively working to diminish biases, we lay the groundwork for an AI environment that aligns with societal values. A harmonious blend of technology, ethics, and social consciousness will be instrumental in unlocking AI’s transformative power.

AI in the finance sector: a balancing act

As the world continues to navigate through a period of unparalleled technological advancement, the finance industry finds itself at a fascinating crossroads. In this intricate web of numbers, policies, and global economics, AI is emerging as both a powerful ally and a potential challenge. The integration of AI within the financial sector has unlocked a myriad of possibilities, from enhancing efficiency to creating entirely new platforms for investment.

However, with great potential comes great responsibility. In this section, we will delve into the heart of the matter, focusing on the general and technical risks associated with AI’s growing presence in the financial landscape. We will look beyond the opportunities and into the complexities and hazards, unraveling the threads that bind this cutting-edge technology to an industry that holds the keys to global economic stability.

1. Data security

Financial institutions handle vast quantities of sensitive data. AI models require extensive datasets to train on, exposing these to potential cyber threats. A breach could mean unauthorized access to critical information, including personal details and transaction history.

2. Model complexity and overfitting

AI models in finance are often highly complex. Overfitting to past data might lead to models that perform poorly on unseen data. This can result in misguided investment strategies and risk assessments.

3. Market manipulation and unintended consequences

Sophisticated AI-driven trading strategies could unintentionally cause market manipulation. Models reacting to each other in unforeseen ways might lead to market turbulence or even crashes.
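The overfitting risk from point 2 can be illustrated with a small experiment. The sketch below fits two toy models to synthetic data: a simple straight line and a "memorizer" that repeats the value of the closest training point. The data, the noise level, and both models are assumptions chosen for illustration and do not represent a real trading model.

```python
import random

def fit_line(xs, ys):
    """Ordinary least-squares fit of a straight line."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept

def fit_memorizer(xs, ys):
    """Overly flexible model: return the target of the nearest training point."""
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]

def mse(model, xs, ys):
    """Mean squared error of a model on a dataset."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def make_data(rng, n):
    """Synthetic data: a linear trend plus random noise."""
    xs = [rng.uniform(0, 10) for _ in range(n)]
    ys = [2.0 * x + rng.gauss(0, 1.0) for x in xs]
    return xs, ys

rng = random.Random(0)
x_train, y_train = make_data(rng, 50)  # "past" data
x_test, y_test = make_data(rng, 50)    # unseen data

models = {
    "line": fit_line(x_train, y_train),
    "memorizer": fit_memorizer(x_train, y_train),
}
for name, model in models.items():
    print(name,
          "train MSE:", round(mse(model, x_train, y_train), 3),
          "test MSE:", round(mse(model, x_test, y_test), 3))
```

The memorizer reproduces the training data perfectly because it has also learned the noise, so its error on unseen data is necessarily higher than on the data it was fitted to. Evaluating on held-out data, as done here, is the basic safeguard against being misled by a perfect-looking backtest.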

AI finance case study: a technical examination of algorithmic trading

The technical risks associated with AI in finance require both an understanding of the underlying algorithms and a broader perspective on market dynamics and regulatory constraints. As AI continues to penetrate the financial industry, these concerns will necessitate thorough examination and strategic risk management. This can be seen by observing one of the current use cases for AI in the finance industry: algorithmic trading.

High-frequency trading (HFT): HFT uses complex algorithms to trade at sub-millisecond speeds. These algorithms need constant monitoring and fine-tuning to prevent undesirable market behavior.

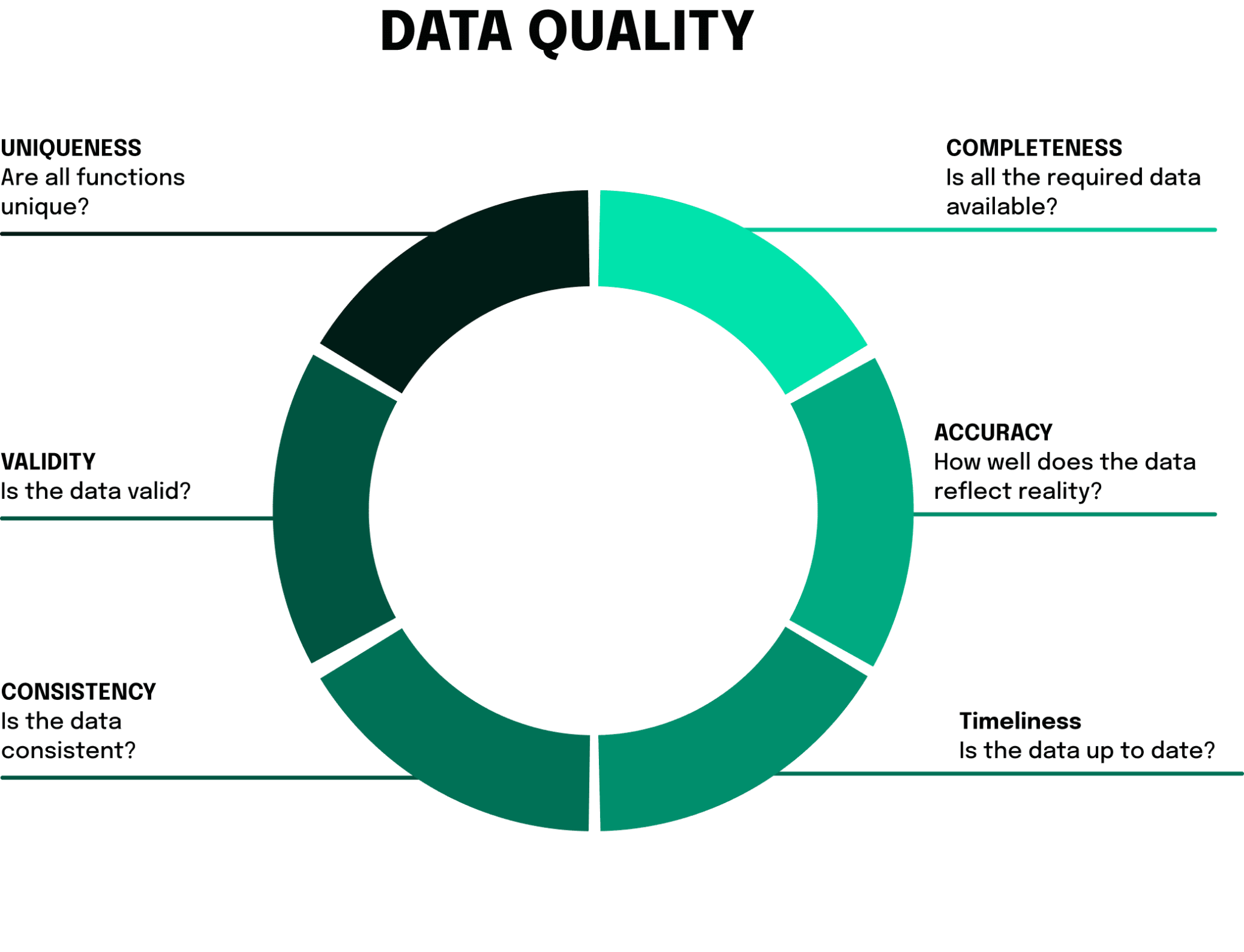

Machine learning and data quality: Algorithmic trading often relies on machine learning, requiring high-quality data for effective modeling. Noise in the data or biases in selection can lead to incorrect predictions and significant financial losses. To reduce these problems, developers of such trading systems need to be able to answer a number of specific questions. You can find the most relevant questions in the accompanying graphic.

Ethical and fairness considerations: The ability of large financial institutions to leverage advanced AI models might create unfair advantages over smaller players, raising ethical concerns. Financial institutions can adopt several practices to minimize these risks. Below is a short but relevant selection of possible actions:

- Increase transparency: Financial institutions should be more open about how their trading algorithms work. This could be done by introducing standards that allow insight into algorithm decision-making without revealing proprietary secrets.

- Collaboration with regulators: Involving regulators in the development and monitoring of algorithms could increase confidence in the systems and ensure that they meet regulatory requirements. This point is closely related to increased transparency and is an important step towards greater trust in new AI-based financial products. The EU, for example, is already working on new AI legislation.

- Cooperation with academic research: Cooperation between universities and companies is widely recognized as important for innovation and education, and it matters even more as competition in global markets and the race for innovation and growth intensify. Supporting research projects aimed at developing fair and ethical trading algorithms could yield innovative solutions that advance the industry as a whole.

- Education and training: Training employees in ethical trading practices and understanding the algorithms they work with is essential. From general ethics training to AI-specific training, many offerings can already be found and implemented today.

Robustness and stress testing: AI models in finance must undergo rigorous testing to ensure they react appropriately under extreme market conditions. The inability of an algorithm to adapt to sudden market changes could have catastrophic consequences.
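A stress test of this kind can be sketched in a few lines. In the example below, a deliberately naive toy rule stands in for the AI model: it sizes an order in proportion to the most recent price move. The rule, the scenarios, and the order limit are hypothetical assumptions made for illustration only.

```python
ORDER_LIMIT = 1_000.0  # hypothetical risk limit on the absolute order size

def toy_model_order(prices, scale=10_000.0):
    """Hypothetical stand-in for a trading model.

    Sizes the next order in proportion to the most recent return.
    """
    last_return = prices[-1] / prices[-2] - 1.0
    return scale * last_return

# Hypothetical market scenarios, each given as a short price path.
scenarios = {
    "calm_market": [100.0, 100.5],
    "gap_up": [100.0, 108.0],
    "flash_crash": [100.0, 70.0],
}

def run_stress_test(model, scenarios, limit):
    """Return the names of all scenarios in which the model breaches the limit."""
    breaches = []
    for name, prices in scenarios.items():
        order = model(prices)
        if abs(order) > limit:
            breaches.append(name)
    return breaches

breaches = run_stress_test(toy_model_order, scenarios, ORDER_LIMIT)
print("Scenarios breaching the order limit:", breaches)
```

Here the toy rule behaves sensibly in calm conditions but reacts far too strongly to the sudden 30% drop. A real stress test would cover many more scenarios and metrics, but the principle of checking model behavior against hard limits under extreme inputs stays the same.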

Risks of AI in the creative industry

In an era where imagination meets automation, the creative industry is witnessing a revolution like never before. AI is not just an auxiliary tool in the hands of artists, writers, and creators; it has become a collaborator, a co-creator, and sometimes, even a challenger. While the fusion of AI with creativity has led to innovations that were once the stuff of dreams, it also presents a new set of risks and concerns that cannot be ignored. From AI-generated content to think bubbles shaped by algorithms, the intersection of technology with artistry brings forth both marvel and apprehension. In this section, we will explore the less-illuminated side of this union, focusing on the potential risks of AI in the creative industry. Through technical explanations and critical insights, we’ll uncover the multifaceted impacts of AI on creativity, paving the way for an informed discussion on the future of art and innovation.

The power of AI to churn out content on an enormous scale has brought with it a flood of issues. These concerns take the shape of two prominent problems:

- Quality dilution: The rapid pace of AI-powered production risks eclipsing the careful thought and unique spark that defines human-made content. This overproduction can lead to a sea of materials that lack depth and integrity.

- Authenticity and originality: In crafting its works, AI often relies on pre-existing data. This method might inadvertently replicate familiar and overexploited trends, causing genuine creativity to get lost in the mix.

Think bubbles created by algorithms

Tailoring content to individual tastes, a task often handled by AI, can limit diversity and lead to isolated pockets of thought, or “think bubbles.” This phenomenon may reinforce existing biases and contribute to societal divisions. The same technology that enables personalized content can also be weaponized to steer opinions. This power comes with a risk, especially when algorithms craft messages designed to play into individual prejudices.

Technical risks: intellectual property and dependence on data

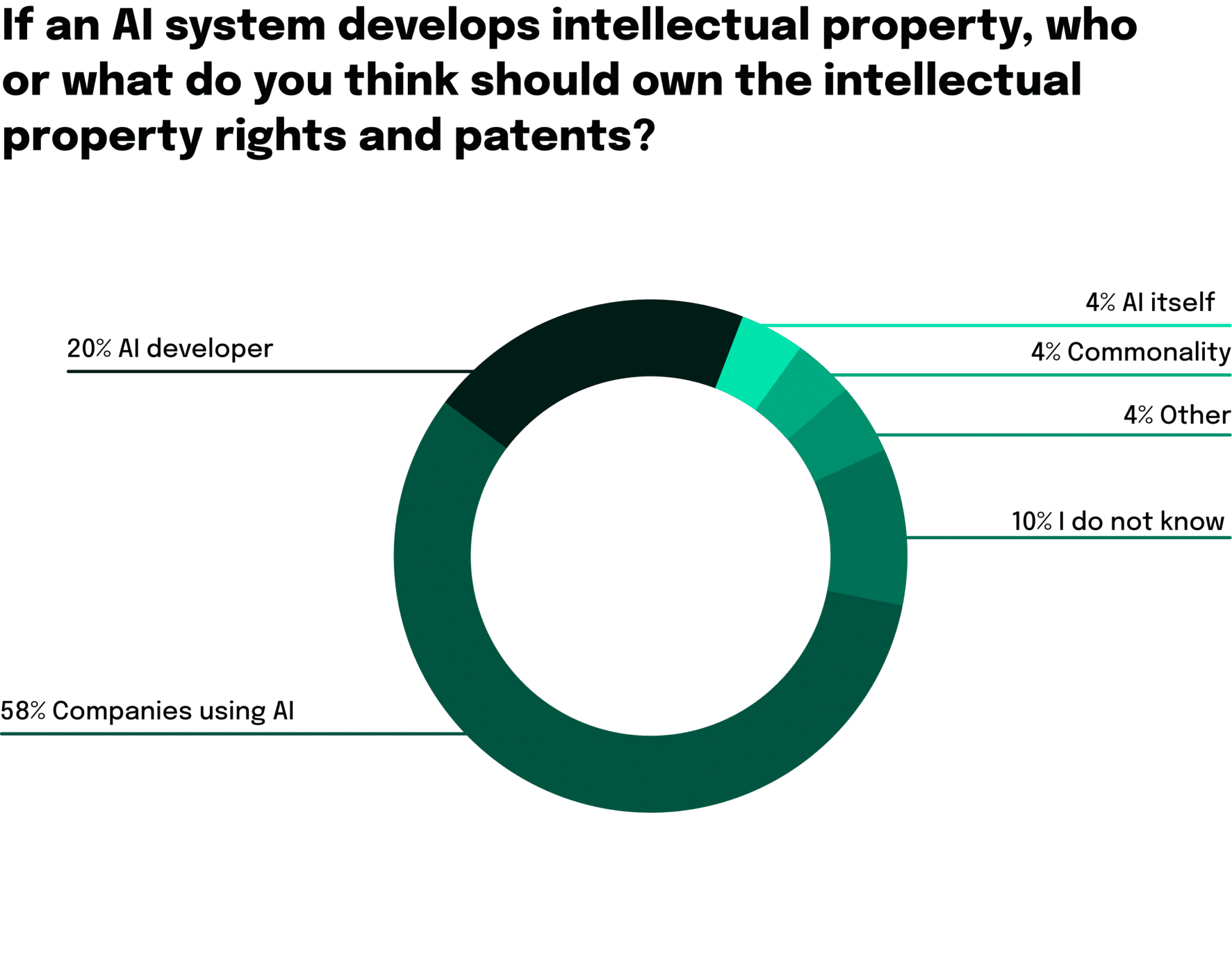

Intellectual property concerns: In the world of AI, determining who owns what, along with issues related to infringement and licensing, is a legal quagmire that continues to challenge experts. As the accompanying graphic shows, there is substantial disagreement regarding intellectual property rights and patents for AI-created work.

Dependence on quality data: Without properly curated data, AI’s creative efforts can go awry. Ensuring that the information feeding into these systems is accurate and unbiased is crucial to avoid inappropriate or even harmful outputs.

Algorithmic bias in creativity: Biases in data or the very structure of algorithms can affect AI’s creative decisions. Such distortions have broad societal impacts, reinforcing stereotypes or marginalizing certain perspectives.

Generative adversarial networks (GANs) and content authenticity

The sophisticated technology behind Generative Adversarial Networks (GANs) has enabled the development of highly deceptive visual forgeries. The potential misuse of deepfakes for fraudulent or malicious purposes is a growing concern.

With the rise of AI-generated forgeries, verifying the authenticity of creative works has become a complex problem. The industry may have to rely on intricate verification systems and emerging technologies like blockchain to trace and confirm content’s origin.
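One simple ingredient of such verification systems is a cryptographic fingerprint that is recorded when a work is published. The sketch below uses SHA-256 from Python's standard library and an in-memory dictionary as a stand-in for a registry. The registry, the work ID, and the content are hypothetical, and a production system (blockchain-based or otherwise) would need far more than this.

```python
import hashlib

def fingerprint(content: bytes) -> str:
    """Return the SHA-256 digest of the content as a hex string."""
    return hashlib.sha256(content).hexdigest()

# Hypothetical registry mapping a work ID to the fingerprint recorded at
# publication. A plain dictionary stands in for a tamper-resistant store.
registry = {}

def register(work_id: str, content: bytes) -> None:
    """Record the fingerprint of a newly published work."""
    registry[work_id] = fingerprint(content)

def is_unaltered(work_id: str, content: bytes) -> bool:
    """Check whether the content matches the fingerprint on record."""
    return registry.get(work_id) == fingerprint(content)

original = b"original artwork bytes"
register("poster-001", original)

print(is_unaltered("poster-001", original))                   # matches the record
print(is_unaltered("poster-001", b"manipulated artwork"))     # content was changed
print(is_unaltered("unknown-work", original))                 # nothing on record
```

A fingerprint like this only confirms that a file is byte-for-byte identical to what was registered. It cannot tell whether the registered work was itself AI-generated, which is why it is just one piece of a broader verification approach.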

The complex interplay between AI and creativity, while promising in many ways, also brings with it a multitude of challenges. From quality control to legal obstacles, understanding and navigating these risks requires a delicate balance of innovation and caution.

Our final thoughts about the negative impact of AI

We have highlighted key challenges around AI in the previous sections. Our journey began with a look at how AI is reshaping the landscape of work, including the opportunities it creates and the key social and economic drivers behind the change.

We analyzed how hidden biases in algorithms and data can have unintended social consequences. Our look at the financial sector painted a nuanced picture and underscored the need for careful consideration of new AI tools. In the creative sector, we explored the dangers associated with an overabundance of AI-generated content and thought isolation created by machine-driven algorithms.

At neosfer, we are engaged in research and development of products and services that lead to a sustainable transformation of financial services. We see AI as one of the fundamental technologies that is helping us drive this transformation. With proper leveraging of the technology, we can boost productivity, gain efficiency and provide a good user experience to our customers.

The technology is evolving at a remarkable pace. Multiple AI technology stacks and large language models, built on huge amounts of data and catering to different use cases, are already available. It is important not only to choose a stack based on the speed and efficiency of development and deployment, but also to have frameworks in place for accountable and responsible AI design. Ways to mitigate the potential risks of building AI-powered systems include understanding the data used to train these models, collecting further training data mindfully, focusing on bias mitigation in AI algorithms, being transparent and explainable towards customers, and continuously learning and adapting.

In conclusion, while AI brings forth numerous opportunities and benefits, it is essential to recognize and address the negative side of AI to ensure that its development and deployment align with human values, ethics, and societal well-being. Striking a balance between embracing AI’s potential and mitigating its risks is crucial for shaping a future where AI serves as a force for positive change.

neosfer GmbH

Eschersheimer Landstr 6

60322 Frankfurt am Main

Part of the Commerzbank Group

+49 69 71 91 38 7 – 0 info@neosfer.de presse@neosfer.de bewerbung@neosfer.de